“International Internet traffic growth accelerated to 79 percent in 2009, up from 61 percent in 2008 …”

Source: TeleGeography, September 2009

This statement is one of many research findings showing that Internet traffic has grown year over year and will continue to grow. The trend is reinforced by the continued growth of smartphone usage and the recent introduction of the iPad.

This growth in Internet traffic means that most websites today are expected to see more traffic and more users coming into their data centers and networks than they currently serve. While this is great for business, many data centers are not designed to effectively manage this ongoing growth in traffic usage. This is due to two main facts:

- Such data centers still rely primarily on physical servers, which makes it hard for them to scale quickly and simply as traffic grows.

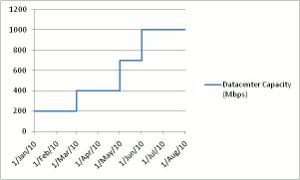

- Such data centers are designed to handle “x” amount of traffic and users, usually with a certain percentage on top of that for peak times. This means they “grow” over time in a graded fashion: at certain points in the data center’s lifecycle, more physical network resources (hardware-based servers) are added to boost capacity.

An example of the data center lifecycle can be seen in Figure 1.

Over and Under

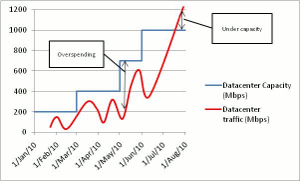

While the graded approach to data center design is more straightforward from an architecture point of view, it has two main drawbacks which derive from the fact that traffic patterns do not follow such graded trends, but rather can be high or low at any moment in time, like a sine wave (see Figure 2).

These drawbacks include:

- Potential overspending on network infrastructure

- The risk of being under capacity

Overspending will occur whenever the network infrastructure has more capacity than what is actually needed. However, the second drawback is worse, since being under capacity means there are not enough resources to manage all users, which means loss of business.
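The two drawbacks can be illustrated with a small calculation. The sketch below is not taken from the figures in this article: the demand curve (steady growth plus a sine-wave swing), the stepped capacity schedule and all of the numbers are assumptions chosen only to show how a graded capacity plan ends up both above and below actual demand at different times.

```python
import math

def demand(t, base=100.0, growth=5.0, amplitude=40.0, period=12.0):
    """Hypothetical demand at period t: linear growth plus a sine-wave swing."""
    return base + growth * t + amplitude * math.sin(2 * math.pi * t / period)

def stepped_capacity(t, initial=150.0, step=60.0, interval=12):
    """Hypothetical graded capacity: one block of hardware added every `interval` periods."""
    return initial + step * (t // interval)

def over_and_under(periods):
    """Sum the unused capacity (over) and the unmet demand (under) across all periods."""
    over = 0.0
    under = 0.0
    for t in range(periods):
        gap = stepped_capacity(t) - demand(t)
        if gap >= 0:
            over += gap      # capacity paid for but not used
        else:
            under += -gap    # demand that could not be served
    return over, under

over, under = over_and_under(36)
print(f"unused capacity: {over:.1f}, unmet demand: {under:.1f}")
```

With these made-up parameters both totals are greater than zero: the same stepped plan overspends in quiet periods and falls short during peaks.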

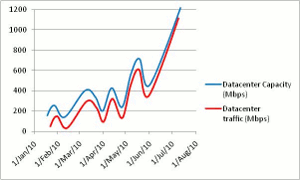

It is clear that a different type of data center network design is needed, one which will be elastic and dynamic enough to follow the requirements of actual usage, rather than future usage.

Designing such a data center network means being able to add computing resources on demand, according to the needs of each application. If a peak in traffic occurs, the data center network adapts and adds more resources; once the peak is over, these resources are removed.
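As a rough sketch of this on-demand behavior, the function below works out how many servers a given load calls for and how many should be added or removed. The names, the 20 percent headroom and the per-server capacity figures are assumptions made for illustration, not values from any particular product.

```python
def servers_needed(load_rps, per_server_rps, headroom_percent=20, min_servers=1):
    """Servers required to carry `load_rps` with some headroom (all values hypothetical)."""
    padded = load_rps * (100 + headroom_percent)
    # Ceiling division on integers: round up to a whole server.
    needed = -(-padded // (100 * per_server_rps))
    return max(min_servers, needed)

def scaling_delta(running, load_rps, per_server_rps):
    """Positive result: servers to add. Negative result: servers to remove."""
    return servers_needed(load_rps, per_server_rps) - running

# A traffic peak arrives while 3 servers are running.
print(scaling_delta(running=3, load_rps=1000, per_server_rps=100))
# The peak passes while 12 servers are running.
print(scaling_delta(running=12, load_rps=250, per_server_rps=100))
```

The minimum of one server is a design choice in this sketch so that the application never scales down to nothing.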

Note: for this approach to work, the data center network should implement server virtualization technologies to replace the physical architecture (where needed), since it is much easier to provision virtual server resources than physical server resources.

Auto or Manual?

However, implementing virtualization technologies is only half of the story, and this is where a smart, flexible and adaptive application delivery infrastructure comes into play. The importance of having such an application delivery infrastructure is that without it, the data center will still be managed manually rather than automatically.

That means computing resources will have to be added manually by the IT manager and the application delivery infrastructure will have to be manually updated with every change.

A smart, flexible and adaptive application delivery infrastructure has to provide a layer of capabilities that together allow the IT manager to create a dynamic data center that can meet both current and future capacity needs. These capabilities include the ability to:

- Adapt in real time to changes in application and user traffic, adding or removing virtual server resources on demand so that business applications get the resources they need;

- Monitor application and user performance, and respond automatically if degradation occurs and more resources are needed;

- Automatically update the configuration of the application delivery infrastructure with any change made to the virtual server infrastructure;

- Easily scale the application delivery infrastructure on demand (without a forklift upgrade) as more services and capacity are required. This on-demand scaling should occur throughout the network: at the application delivery (server load balancer) level, the WAN link optimization level and the security level;

- Easily expand the WAN connectivity infrastructure on demand to accommodate growth in WAN bandwidth and usage.

As can be seen, this layer of capabilities allows for automatic adaptation to changes in either application performance or the virtual infrastructure. If needed, it can also scale the application delivery infrastructure itself, thus ensuring the data center network continuously provides the exact capacity needed to serve applications and users at each point in time. An example of such a data center network can be seen in Figure 3.
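The monitor-and-respond behavior described above can be sketched as a simple control loop. Everything here is hypothetical: `ServerPool` is an in-memory stand-in for a load balancer's list of back-end servers, and the response-time thresholds are arbitrary values, not recommendations. The point is only that provisioning a virtual server and updating the delivery configuration happen in one automatic step.

```python
from dataclasses import dataclass, field

@dataclass
class ServerPool:
    """Hypothetical stand-in for the load balancer's list of back-end servers."""
    members: list = field(default_factory=list)
    next_id: int = 1

    def add_server(self):
        # Provision a virtual server and register it with the pool in one step.
        name = f"vm-{self.next_id}"
        self.next_id += 1
        self.members.append(name)
        return name

    def remove_server(self):
        # Never drop the last server; return None if nothing was removed.
        return self.members.pop() if len(self.members) > 1 else None

def adapt(pool, response_ms, slow_ms=500, fast_ms=100):
    """Scale up on degraded response time, scale down when there is clear slack."""
    if response_ms > slow_ms:
        pool.add_server()
        return "scale_up"
    if response_ms < fast_ms and len(pool.members) > 1:
        pool.remove_server()
        return "scale_down"
    return "hold"

pool = ServerPool()
pool.add_server()
for sample_ms in [120, 650, 700, 300, 80, 60]:  # made-up response-time samples
    action = adapt(pool, sample_ms)
    print(sample_ms, action, pool.members)
```

A real deployment would read measurements from monitoring and call the virtualization and load balancer management interfaces; this sketch leaves those out and keeps only the decision logic.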

To summarize, as Internet traffic levels continue to grow, an increasing number of websites will need to accommodate more users and more traffic on their networks.

Only a smart, flexible and adaptive application delivery infrastructure will allow them to do so easily and cost-effectively.

Amir Peles is CTO of Radware.