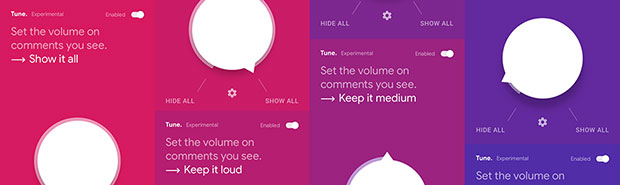

Jigsaw, which is owned by Google parent company Alphabet, on Tuesday released Tune, an experimental Chrome extension that lets users hide comments its algorithms identify as toxic. It is available for Mac, Windows, Linux and Chrome OS.

Tune builds on the same machine learning (ML) models that power Jigsaw’s Perspective API to rate the toxicity of comments.

Tune users can adjust the volume of comments from zero to anything goes.

Tune is not meant to be a solution for direct targets of harassment, or a solution for all toxicity, noted Jigsaw Product Manager C.J. Adams in an online post. It’s an experiment to show people how ML technology can create new ways to empower people as they read discussions online.

Tune works for English-language comments only, on Facebook, Twitter, YouTube, Reddit and Disqus. Comments in other languages will be shown above, and hidden below, a predefined threshold.

Tune’s ML model is experimental, so it still misses some toxic comments and incorrectly hides some nontoxic comments. Developers have been working on the underlying technology, and users can give feedback in the tool to help improve its algorithms.

Fear and Loathing Over Tune

“We have no idea how to police the Internet, so consequently we’re going to turn it over to a robot,” quipped Michael Jude, program manager at Stratecast/Frost & Sullivan.

“You don’t know what it’s doing with the data it collects. You don’t know who built it. You’re giving up the freedom to make your own determinations,” he told TechNewsWorld.

“If one decides to utilize social media, one is tacitly agreeing to access a certain amount of dissent, hate speech, whatever,” Jude pointed out. “As a famous comedian once said, you pays your money and you takes your chances.”

How Tune Works

Tune is part of the Conversation-AI research project and is completely open source, so anyone can examine the code or contribute directly to it on GitHub.

“The more granular the APIs are and the more open the controls, the better developers can tune the Conversational AI algorithms to generate less false positives and false negatives,” said Ray Wang, principal analyst at Constellation Research.

However, Tune will require an increasing amount of data for fine-tuning. Users have to sign up to provide access to the Perspective API.

Comments read are not associated with users’ accounts or saved by Tune. Instead, they are sent to the Perspective API for scoring and then deleted automatically once the score is returned.
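The score-then-filter flow described above can be sketched in a few lines of Python. This is an illustration only, not Tune's actual code: the request and response shapes follow the publicly documented Perspective API format as best understood here, the function names are hypothetical, the scores in the usage example are made up, and the rule that a comment is hidden when its score exceeds the user's volume setting is an assumption about how the dial maps to a threshold.

```python
def build_request(text):
    """Build a request body in the shape documented for the Perspective API.

    The doNotStore flag asks the service not to retain the comment text,
    which matches the article's description of comments being discarded
    after scoring. Treat the exact field names as an assumption.
    """
    return {
        "comment": {"text": text},
        "languages": ["en"],  # Tune scores English-language comments only
        "requestedAttributes": {"TOXICITY": {}},
        "doNotStore": True,
    }


def toxicity_score(response):
    """Pull the summary toxicity score (0.0 to 1.0) out of a response dict."""
    return response["attributeScores"]["TOXICITY"]["summaryScore"]["value"]


def visible_comments(scored, volume):
    """Keep comments whose score does not exceed the volume setting.

    scored is a list of (text, score) pairs; volume is a hypothetical
    threshold where 0.0 hides almost everything and 1.0 shows everything.
    """
    return [text for text, score in scored if score <= volume]


if __name__ == "__main__":
    # Made-up scores for illustration; no network call is made here.
    scored = [("Thanks for sharing", 0.02), ("You are an idiot", 0.92)]
    print(visible_comments(scored, volume=0.5))  # ['Thanks for sharing']
```

Keeping the filter on the reader's side is what separates this approach from moderation: nothing is removed from the page's source, and a different volume setting simply changes which comments are displayed.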

There are two ways of submitting feedback: by clicking About > Feedback, where the account’s user ID is included in the feedback report; or by submitting a correction to how a comment is scored. For corrections, the text of the comment and the user’s answer are stored for training the ML system, but the submission is not linked to the user’s ID.

Free Speech Issues

As with earlier attempts to manage or control online comments, Tune is likely to spark a debate about freedom of speech.

“In the movie Zorro, the Gay Blade, a woman told the military commander of a town who tried to stop her from protesting that the rules of the town were that anyone could say anything they liked in the town square,” Jude recalled.

“The woman is right!” the commander responded. “She can say anything she likes. Arrest anyone who listens.”

Still, “some seem to believe that free speech means you have to listen to what they say,” remarked Rob Enderle, principal analyst at the Enderle Group.

“Even though that’s incorrect, it doesn’t reduce their belief that their own words should be inviolate,” he told TechNewsWorld.

The Tune technology “is a good-faith way to address a real problem, and it will learn and improve over time,” maintained Doug Henschen, principal analyst at Constellation Research.

“Tune puts the control in the hands of the individual,” he told TechNewsWorld. “The comments are still being posted, and therefore aren’t curbed, but users are choosing not to see them. That’s their right.”

With bots and spam factories churning out fake content, Tune “is the answer to taking back some control to enable free speech,” Constellation’s Wang noted.

Tune “lets you get rid of the trolls who have a disproportionate voice,” he said. “It will also curb folks who put out the same content multiple times in comments.”

The algorithms appear to be working well for Google on its sites that accept comments, Wang noted, and “releasing the tech to others is a good first step.”

Tune’s Threat to Social Media

Social media is all about eyeballs, and the more controversial a statement is, the more eyeballs it will draw to a page.

That raises the question of whether the use of Tune might spark a backlash from social media sites.

“I don’t think social media sites would damn their own users for trying to control what they see,” Constellation’s Henschen said.

They might in fact see Tune as helpful, because “content owners do have an obligation to try to address hate speech and the like, but doing so at scale is expensive,” he noted.

Perspective can be fooled through clever use of language, but Google resolved some of the early issues that arose.

However, another group of researchers last summer published a paper that said all proposed detection techniques could be circumvented by adversaries automatically inserting typos, changing word boundaries, or adding innocuous words to speech. They recommended using character-level features rather than word-level features to make ML speech detection models more robust.
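A small sketch can show why character-level features hold up better against inserted typos than word-level ones. It is not the researchers' method or Perspective's model: it simply compares how much two feature sets overlap (Jaccard similarity) before and after a typo, and the function names and sample strings are hypothetical.

```python
def word_features(text):
    """Word-level features: the set of lowercase whitespace-separated tokens."""
    return set(text.lower().split())


def char_ngrams(text, n=3):
    """Character-level features: the set of overlapping n-character windows."""
    s = text.lower()
    return {s[i:i + n] for i in range(len(s) - n + 1)}


def jaccard(a, b):
    """Share of features two sets have in common (1.0 means identical)."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


if __name__ == "__main__":
    original, perturbed = "stupid", "stupiid"  # one inserted letter
    print(jaccard(word_features(original), word_features(perturbed)))  # 0.0
    print(jaccard(char_ngrams(original), char_ngrams(perturbed)))      # 0.5
```

To a word-level model the misspelled token is an entirely new word, so the overlap drops to zero. The character trigrams still share "stu", "tup" and "upi", which is the kind of residual signal that a character-level model can pick up.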

The Tune ML model is still immature, so “I expect to see a lot of issues like this crop up,” Enderle said. Once it has matured, however, it “should be able to deal with these efforts to compromise it effectively enough to make gaming the system a waste of time.”