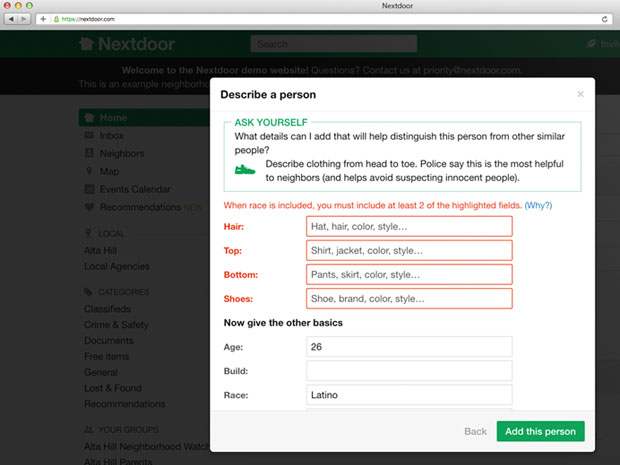

Nextdoor last week rolled out a new form-based process for making crime and safety reports across all 110,000 of its neighborhoods.

Implementation of the tool reduced incidents of racial profiling by 75 percent in areas where it was tested, according to CEO Nirav Tolia.

Nextdoor’s Motivation

Some Nextdoor members had begun using the site to post messages targeting racial minorities, according to reports that began surfacing last year.

For example, users in Oakland, California, frequently posted vague warnings about suspicious activity focusing on black citizens walking down the street, driving a car, or knocking on a door, the East Bay Express reported.

The key principles driving Nextdoor’s approach:

- Define a high bar for racial profiling. Nextdoor worked with community members, law enforcement, and outside experts to develop a definition that makes sense in the neighborhood context;

- Encourage members to stop and think before posting. Members are taken through a multistep process that, among other things, asks them to consider whether they would still view an activity as suspicious if they disregarded race or ethnicity;

- Require responsible and useful posting. Members are cautioned against casting suspicion over an entire race or ethnic group;

- Leverage the community to create quick feedback loops. Members are urged to flag instances of racial profiling so the offending posts can be removed quickly; and

- Test, learn and improve features. Though it has carried out extensive tests and analyses, Nextdoor is far from done, noted Tolia.

“If Nextdoor.com is holding itself open as a public forum where freedom of speech is the rule, then this sort of screening for racial profiling is actually inappropriate,” suggested Michael Jude, a program manager at Stratecast/Frost & Sullivan.

“This sort of governance implicitly acknowledges that Nextdoor.com is not a public forum where free speech is guaranteed,” he told TechNewsWorld.

Pinning Down Racial Profiling

Because there is no universally accepted definition of the term “racial profiling,” attempts to combat it are often viewed as subjective and tend to stir up emotions.

“If it’s bias against a particular ethnicity, then it’s probably bad,” Jude said, “but if it’s a statement of fact — the guy I saw breaking the window was black, white, brown … then it’s an objective observation.”

That type of description is problematic, according to Nextdoor, because it doesn’t provide sufficient information to make an identification, but it does cast suspicion on every person of the specified gender and race who might be in the neighborhood for legitimate reasons.

Nextdoor is “trying to get this right, but every service is limited by the people that use it,” remarked Rob Enderle, principal analyst at the Enderle Group.

“No service will be able to overcome anyone’s deeply held beliefs,” he told TechNewsWorld.

“I’d push harder for pictures,” Enderle suggested, noting that they “are generally better than any description.”