This week is Siggraph 2022, where Nvidia will be doing one of the keynotes.

While the metaverse on the consumer side remains largely limited to gaming, which has effectively consisted of metaverse instances for years, the near-term focus is industrial use. Nvidia at Siggraph will be talking about its leadership in integrating AI into the technology, the creation and application of digital twins, and successes at major new robotic factories like the one BMW has created with the help of Siemens.

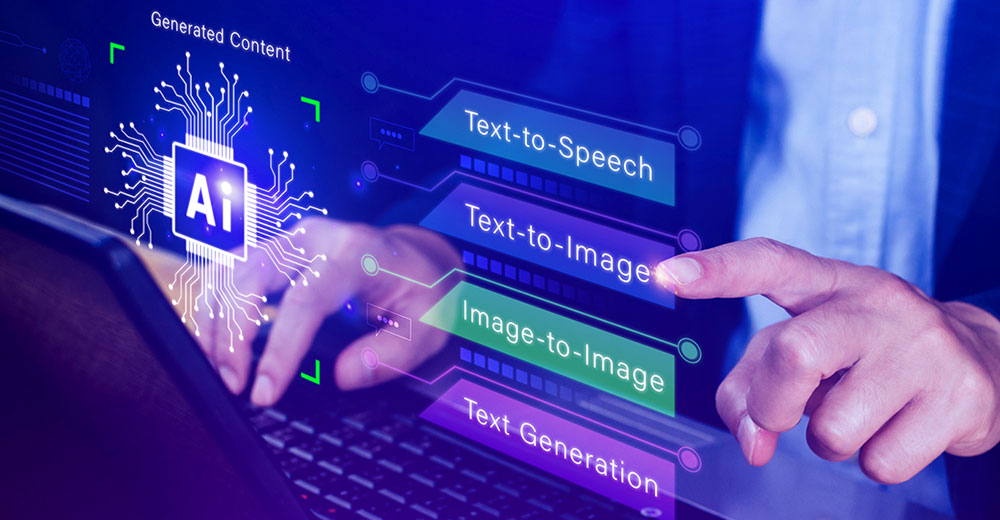

But what I find even more interesting is that as metaverse tools like Nvidia’s Omniverse become more consumer-friendly, the ability to use AI and human digital twins will enable us to create our own worlds where we dictate the rules and where our AI-driven digital twins will emulate real people and animals.

At that point, I expect we’ll need to learn what it means to be gods of the worlds we create, and I doubt we are anywhere near ready, both in terms of the addictive nature of such products and how to create these metaverse virtual worlds in ways that can become the basis for our own digital immortality.

Let’s explore the capabilities of the metaverse this week. Then we’ll close with my product of the week: the Microsoft Surface Duo 2.

Siggraph and the AI-Driven Metaverse

If you’ve participated in a multiplayer video game like Warcraft, you’ve experienced a rudimentary form of the metaverse. You’ve also discovered that things that behave as they do in the real world (doors and windows that open, leaves that move with the wind, and people who behave like people) don’t yet exist there.

With the introduction of digital twins and physics through tools like Nvidia’s Omniverse, this is changing. Simulations that depend on reality, like those used to develop autonomous cars and robots, can now work accurately and help reduce potential accidents without putting humans or real animals at risk, because those accidents happen first in a virtual world.

At Siggraph, Nvidia will be talking about the current capabilities of the metaverse and its near-term future where, for a time, the money and the greatest capabilities will be tied to industrial, not entertainment, use.

For that purpose, the need to make an observer feel like they’re in a real world is, outside of simulations intended to train people, substantially reduced. But training humans is a goal of the simulations, as well, and the creation of human digital twins will be a critical step to our ability to use future AIs to take over an increasing amount of the repetitive and annoying part of our workloads.

It is my belief that the next big breakthrough in human productivity will be the ability of regular people to create digital twins of themselves, which can do an increasing number of tasks autonomously. Auto-Fill is a very rudimentary early milestone on this path that will eventually allow us to create virtual clones of ourselves that can cover for us or even expand our reach significantly.

Nvidia is on the cutting edge of this technology. If you want to know what the metaverse is capable of today, attending the Siggraph keynote virtually should be on your critical to-do list.

But if we project 20 or so years into the future, given the massive speed of development in this space, our ability to immerse ourselves in the virtual world will increase, as will our ability to create these existences. These will be worlds where physics as we know it is optional and where we can choose to put ourselves into “god mode” and walk through them as the ultimate rulers of the virtual spaces we create.

Immersion Is Critical

While we will have intermediate steps using prosthetics that use more advanced forms of haptics to attempt to make us feel like we are immersed in these virtual worlds, it is efforts like Elon Musk’s to create better man-machine interfaces that will make the real difference.

By connecting directly to the brain, we should be able to create experiences that are indistinguishable from the real world and place us in these alternative realities far more realistically.

Meta Reality Labs is researching and developing haptic gloves to bring a sense of touch to the metaverse in the future.

Yet, as we gain the ability to create these worlds ourselves, altering these connections to provide greater reality (experiencing pain in battles, for instance) will be optional, allowing us to walk through encounters as if we were super-powered.

Human Digital Twins

One of the biggest problems with video games is that the NPCs, no matter how good the graphics are, tend to use very limited scripts. They don’t learn, they don’t change, and they are barely more capable than the animatronics at Disneyland.

But with the creation of human digital twins, we will gain the ability to populate the worlds we create with far more realistic citizens.

Imagine being able to license the digital twin you create to present to others and use in the companies or games they create. These NPCs will be based on real people, will have more realistic reactions to changes, will be potentially able to learn and evolve, and won’t be tied to your gender or even your physical form.

How about a talking dragon based on your digital twin, for instance? You could even populate the metaverse world you create with huge numbers of clones that have been altered to look like a diverse population, including animals.

Practical applications will include everything from virtual classrooms with virtual teachers to police and military training with virtual partners against virtual criminals — all based on real people, providing the ability to train with an unlimited number of realistic scenarios.

For example, for a police officer, one of the most difficult things to train for is domestic disturbance. These confrontations can go sideways in all sorts of ways. I know of many instances where the police officer stepped in to protect an abused spouse and then got clocked by that same spouse who decided suddenly to defend her husband from the officer.

Today I read a story about a rookie who approached a legally armed civilian who was on his own property. The officer was nearly shot because he attempted to draw on the civilian who hadn’t broken any laws. That civilian was fully prepared to kill the officer had that happened. The officer was fired for this but could have died.

Being able to train for situations like this virtually can help ensure the safety of both the civilian and the officer.

Wrapping Up: God Mode

Anyone who has ever played a game in god mode knows that it really destroys a lot of the value of the game. Yes, you can burn through the game in a fraction of the time, but it is kind of like buying a book and then reading a comprehensive summary with spoilers. Much of the fun of a game is figuring out the puzzles and working through the challenges.

“Westworld” explored what might happen if the virtual people, who were created to emulate humans, figured out they were being abused. To be realistic, these creations would need to emulate pain, suffering and the full gamut of emotions, and it is certainly a remote possibility that they might overcome their programming.

However, another possibility is that people fully immersed in god mode may not be able to differentiate between what they can do in a virtual world and a real one. That could result in some nasty behaviors in the real world.

I do think we’ll find there will be a clear delineation between people who want to create viable worlds and treat those worlds beneficially and those who want to create worlds that allow them to explore their twisted fantasies and secret desires.

This might be a way to determine if someone has the right personality to be a leader because abusing power will be so easy to do in a virtual world, and a tendency to abuse power should be a huge red flag for anyone moving into management.

We are likely still decades away from this capability, but we should begin to think about the limitations of using this technology for entertainment so we don’t create a critical mass of people who view others no differently than the virtual people they abuse in the twisted worlds they will create.

What kind of metaverse god will you be?

Surface Duo 2

I’ve been using the Surface Duo 2 for several months now, and it remains my favorite phone. I’m struck by how many people have walked up to me to ask me about the phone and then said, after I’ve shared with them what it does, they want to buy one.

It has huge advantages when reading emails with attachments or links. The attachment or link opens on the second screen without disrupting the flow of reading the email itself. The same goes for opening a website that requires two-factor authentication. The authentication app is on the second screen, so you don’t have to go back and try to locate the screen you were on, again preserving the workflow.

For reading, it reads and holds like a book with two virtual pages, one on each screen. While I thought I might have issues watching videos because of the gap between the screens, I’ve been watching videos on both screens for some time now, and unlike my issues using dual-screen monitors where I find the separation annoying, this gap doesn’t annoy me at all.

Ideally, this phone works best with a headset or a smartwatch that, like the Apple Watch, lets you talk and listen through it, because holding this form factor to your head is awkward. However, many of us use the speakerphone feature on our smartphones anyhow, and the Surface Duo 2 works fine that way.

In the end, I think this showcases why with a revolutionary device — like the iPhone was, and the Surface Duo 2 is — lots of smart marketing is necessary to make people really understand the advantages of a different design. Otherwise, they won’t get it.

Recall that the iPhone design, which emulated the earlier failed LG Prada phone, was backed by plenty of marketing while the Prada, even though it had an initially stronger luxury brand, was not.

In any case, Microsoft’s Surface Duo 2 remains my favorite smartphone. It’s truly awesome — and my product of the week.